MadMiner

Particle physics processes are usually modelled with complex Monte-Carlo simulations of the hard process, parton shower, and detector interactions. These simulators typically do not admit a tractable likelihood function: given a (potentially high-dimensional) set of observables, it is generally not possible to calculate the probability of these observables for given model parameters. Particle physicists commonly tackle this problem of “likelihood-free inference” by hand-picking a few “good” observables or summary statistics and filling histograms of them. But this conventional approach discards the information in all other observables and often does not scale well to high-dimensional problems.

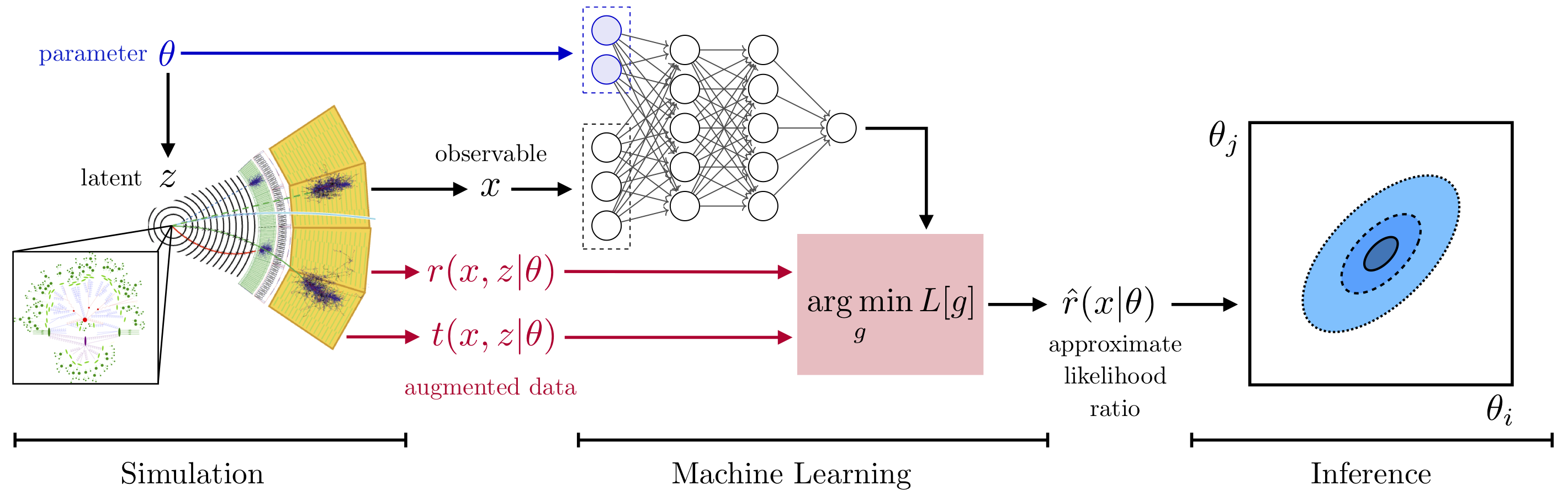

In the three publications “Constraining Effective Field Theories With Machine Learning”, “A Guide to Constraining Effective Field Theories With Machine Learning”, and “Mining gold from implicit models to improve likelihood-free inference”, a new approach has been developed. In a nutshell, additional information is extracted from the simulators. This “augmented data” can be used to train neural networks to efficiently approximate arbitrary likelihood ratios. We playfully call this process “mining gold” from the simulator, since this information may be hard to get, but turns out to be very valuable for inference.
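The idea can be illustrated with a toy sketch. This is not MadMiner code. The simulator, the function names, and the parameter values below are all assumptions chosen for illustration. The toy simulator draws a latent variable z from a Gaussian centred on the parameter θ and then smears it into an observable x. The likelihood of x alone is treated as unknown, but the joint likelihood ratio of (x, z) can be computed from the latent variable. Averaging that joint ratio at fixed x, using events simulated at the denominator parameter point, recovers the likelihood ratio in x. Here a simple binned average stands in for the neural network regression.

```python
import numpy as np


def gaussian_pdf(x, mean, var):
    """Density of a normal distribution with the given mean and variance."""
    return np.exp(-0.5 * (x - mean) ** 2 / var) / np.sqrt(2.0 * np.pi * var)


def simulate(theta, n, rng):
    """Hypothetical toy simulator: latent z ~ N(theta, 1), observable x ~ N(z, 1)."""
    z = rng.normal(theta, 1.0, size=n)
    x = rng.normal(z, 1.0)
    return x, z


def joint_ratio(z, theta0, theta1):
    """Joint likelihood ratio p(x, z | theta0) / p(x, z | theta1).

    p(x | z) does not depend on theta here, so it cancels and only the
    latent-variable densities remain. This is the "augmented data".
    """
    return gaussian_pdf(z, theta0, 1.0) / gaussian_pdf(z, theta1, 1.0)


def true_ratio(x, theta0, theta1):
    """Exact ratio p(x | theta0) / p(x | theta1); known only because the toy
    model is Gaussian (marginally x ~ N(theta, 2))."""
    return gaussian_pdf(x, theta0, 2.0) / gaussian_pdf(x, theta1, 2.0)


rng = np.random.default_rng(0)
theta0, theta1 = 0.5, 0.0  # arbitrary illustrative parameter points

# Simulate at the denominator hypothesis and keep the latent variable.
x, z = simulate(theta1, 200_000, rng)
r_joint = joint_ratio(z, theta0, theta1)

# Stand-in for the regression: average the joint ratio in bins of x.
edges = np.linspace(-2.0, 2.0, 9)
centers = 0.5 * (edges[:-1] + edges[1:])
bin_index = np.digitize(x, edges) - 1  # events outside the range are ignored below
estimate = np.array([r_joint[bin_index == i].mean() for i in range(len(centers))])
exact = true_ratio(centers, theta0, theta1)

for c, est, ref in zip(centers, estimate, exact):
    print(f"x = {c:+.2f}   estimated ratio = {est:.3f}   exact ratio = {ref:.3f}")
```

In a realistic setting x is high-dimensional and binning is not feasible, which is why the bin average is replaced by a neural network trained to minimize a squared-error loss against the joint ratio.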

Team

- Kyle Cranmer

- Johann Brehmer

- Irina Espejo

- Sinclert Pérez

- Felix Kling

Presentations

- 16 May 2024 - "SBI in HEP Meets Reality", Alexander Held, APS April Meeting 2024

- 3 Apr 2024 - "Evolution of Analysis Techniques and Statistical Treatment", Alexander Held, APS April Meeting 2024

- 14 Dec 2023 - "Introduction to simulation-based inference (SBI)", Alexander Held, ATLAS end-of-year physics plenary 2023

- 7 Jul 2021 - "MadMiner: a python based tool for simulation-based inference in HEP", Kyle Cranmer, PyHEP 2021

- 23 Nov 2020 - "How machine learning can help us get the most out of high-precision particle physics models", Johann Brehmer, JLab theory seminar

- 12 Nov 2020 - "How machine learning can help us get the most out of high-precision particle physics models", Johann Brehmer, DESY-HU theory seminar

- 26 Oct 2020 - "Madminer on REANA", Sinclert Pérez, IRIS-HEP Future Analysis Systems and Facilities Blueprint Workshop

- 17 Feb 2020 - "Mining for Dark Matter substructure: Learning from lenses without a likelihood", Johann Brehmer, Dark Matter Working Group seminar

- 5 Feb 2020 - "The frontier of simulation-based inference", Johann Brehmer, Workshop on Machine Learning at the LHC

- 6 Jan 2020 - "Normalizing flows and the likelihood ratio trick in particle physics", Johann Brehmer, Deep learning seminar

- 14 Dec 2019 - "Mining gold: Improving simulation-based inference with latent information (poster)", Johann Brehmer, NeurIPS 2019 workshop on Machine Learning and the Physical Sciences

- 7 Nov 2019 - "Constraining effective field theories with machine learning", Alexander Held, 24th International Conference on Computing in High Energy & Nuclear Physics

- 19 Jun 2019 - "MadMiner Update", Johann Brehmer, Analysis Systems Topical Meeting

- 6 Jun 2019 - "Constraining effective field theories with machine learning", Johann Brehmer, INFN Padova seminar

- 18 Apr 2019 - "Constraining effective field theories with machine learning", Johann Brehmer, Higgs and Effective Field Theory 2019

- 18 Mar 2019 - "'Mining gold' from simulators to improve likelihood-free inference", Johann Brehmer, Likelihood-free inference workshop

- 14 Mar 2019 - "Keynote: Constraining effective field theories with machine learning", Johann Brehmer, International Workshop on Advanced Computing and Analysis Techniques in Physics Research

- 28 Feb 2019 - "Bringing together simulations, physics insight, and machine learning to constrain new physics", Johann Brehmer, Dark universe seminar

- 14 Jan 2019 - "Meticulous measurements with matrix elements and machine learning", Johann Brehmer, ITS/CHEP joint seminar

- 11 Jan 2019 - "Improving inference with matrix elements and machine learning", Johann Brehmer, HK IAS Program on High Energy Physics

- 20 Sep 2018 - "Learning to constrain new physics", Johann Brehmer, IPPP seminar

- 13 Sep 2018 - "Learning to constrain new physics", Johann Brehmer, Pheno & Vino Seminar

- 27 Aug 2018 - "Learning to constrain new physics", Johann Brehmer, Elementary particle seminar

- 23 Jul 2018 - "Machine Learning to Probe a BSM Higgs Sector", Johann Brehmer, Higgs Hunting

- 27 Jun 2018 - "Constraining Effective Theories with Machine Learning", Johann Brehmer, Theory seminar

Publications

- Scaling MadMiner with a deployment on REANA, I. Espejo, S. Perez, K. Hurtado, L. Heinrich and K. Cranmer, arXiv 2304.05814 (12 Apr 2023) [1 citation].

- Publishing statistical models: Getting the most out of particle physics experiments, K. Cranmer et al., SciPost Phys. 12 037 (2022) (10 Sep 2021) [53 citations] [NSF PAR].

- Constraining effective field theories with machine learning, J. Brehmer, K. Cranmer, I. Espejo, A. Held, F. Kling, G. Louppe and J. Pavez, EPJ Web Conf. 245 06026 (2020) (16 Nov 2020) [3 citations] [NSF PAR].

- Flows for simultaneous manifold learning and density estimation, Advances in Neural Information Processing Systems 34 (NeurIPS2020) (30 Mar 2020) [28 citations].

- Mining gold from implicit models to improve likelihood-free inference, Proceedings of the National Academy of Sciences; DOI:10.1073/pnas.1915980117 (20 Feb 2020) [139 citations].

- Mining for Dark Matter Substructure: Inferring subhalo population properties from strong lenses with machine learning, The Astrophysical Journal, Volume 886, Number 1; DOI:10.3847/1538-4357/ab4c41 (19 Nov 2019) [92 citations] [NSF PAR].

- The frontier of simulation-based inference, Proceedings of the National Academy of Sciences DOI:10.1073/pnas.1912789117 (04 Nov 2019) [445 citations] [NSF PAR].

- Benchmarking simplified template cross sections in WH production, J. Brehmer, S. Dawson, S. Homiller, F. Kling and T. Plehn, JHEP 11 034 (2019) (19 Aug 2019) [59 citations].

- MadMiner: Machine learning-based inference for particle physics, J. Brehmer, F. Kling, I. Espejo and K. Cranmer, Comput.Softw.Big Sci. 4 3 (2020) (24 Jul 2019) [139 citations] [NSF PAR].

- Effective LHC measurements with matrix elements and machine learning, J. Brehmer, K. Cranmer, I. Espejo, F. Kling, G. Louppe and J. Pavez, J.Phys.Conf.Ser. 1525 012022 (2020) (04 Jun 2019) [21 citations] [NSF PAR].