The Allen application is a fully GPU-based implementation of the first level trigger for the upgrade of the LHCb detector, due to start data taking in 2022. Allen is the first complete high-throughput GPU trigger proposed for a HEP experiment.

IRIS-HEP has been directly supporting vital development work required to monitor the performance of Allen in real time, and to check the quality of the Run 3 LHCb data once the next LHC run begins. IRIS-HEP members have also been involved in almost all aspects of Allen development.

By the end of 2019, we demonstrated that Allen can process the 40 Tbit/s data rate of the upgraded LHCb detector and perform a wide variety of pattern recognition tasks. These include finding the trajectories of charged particles, finding proton–proton collision points, identifying particles as hadrons or muons, and finding the displaced decay vertices of long-lived particles. We further demonstrated that Allen can be implemented in around 500 scientific or consumer GPU cards, that it is not I/O bound, and that it can be operated at the full LHC collision rate of 30 MHz.
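As a back-of-envelope illustration of what these figures imply per card, the sketch below divides the quoted data rate and event rate evenly across 500 GPUs. The even split and the resulting average event size are assumptions made for illustration; they are derived only from the numbers in the paragraph above and are not official LHCb figures.

```python
# Figures quoted in the text above.
DATA_RATE_BITS_PER_S = 40e12  # 40 Tbit/s
EVENT_RATE_HZ = 30e6          # 30 MHz
N_GPUS = 500                  # "around 500" cards (assumed exactly 500 here)


def per_gpu_load(data_rate_bits_per_s, event_rate_hz, n_gpus):
    """Return (events/s per GPU, bytes/s per GPU, mean bytes per event).

    Assumes the load is shared evenly between cards, which is a
    simplification made for this estimate.
    """
    events_per_gpu = event_rate_hz / n_gpus
    bytes_per_gpu = data_rate_bits_per_s / 8 / n_gpus
    bytes_per_event = data_rate_bits_per_s / 8 / event_rate_hz
    return events_per_gpu, bytes_per_gpu, bytes_per_event


events_per_gpu, bytes_per_gpu, bytes_per_event = per_gpu_load(
    DATA_RATE_BITS_PER_S, EVENT_RATE_HZ, N_GPUS
)
print(f"{events_per_gpu / 1e3:.0f} kHz of events per GPU")
print(f"{bytes_per_gpu / 1e9:.0f} GB/s of input per GPU")
print(f"{bytes_per_event / 1e3:.0f} kB implied average event size")
```

Under these assumptions each card would need to handle roughly 60 kHz of events and about 10 GB/s of input.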

By late spring 2020, we had demonstrated that Allen was a fully viable production candidate for the LHCb trigger system. The work done by IRIS-HEP members was critical during the Allen review at CERN.

In June 2020, the LHCb collaboration officially adopted Allen as the baseline choice for the Run 3 trigger. See, e.g., the following press releases:

- Graphics cards farm to help in search of new physics at LHCb

- Allen initiative – supported by CERN openlab – key to LHCb trigger upgrade

- CERN openlab: the use of GPUs for parallel computing within the LHCb experiment

In 2022, IRIS-HEP members worked to make Allen fully production ready for deployment, successfully processing the full data rate in real time at the maximum collision rate that year, roughly 20 MHz:

- LHCb’s unique approach to real-time data processing

- LHCb begins using unique approach to process collision data in real-time

IRIS-HEP members are now working to prepare and deploy the Allen version that will process data at the nominal 40 MHz rate in 2023. Leading our efforts is Kate Richardson, who is lead developer of the Allen monitoring framework and one of several rotating on-call experts for the LHCb real-time processing system.

The Allen software is open source and available on GitLab. It can run without linking to LHCb software.

Monitoring

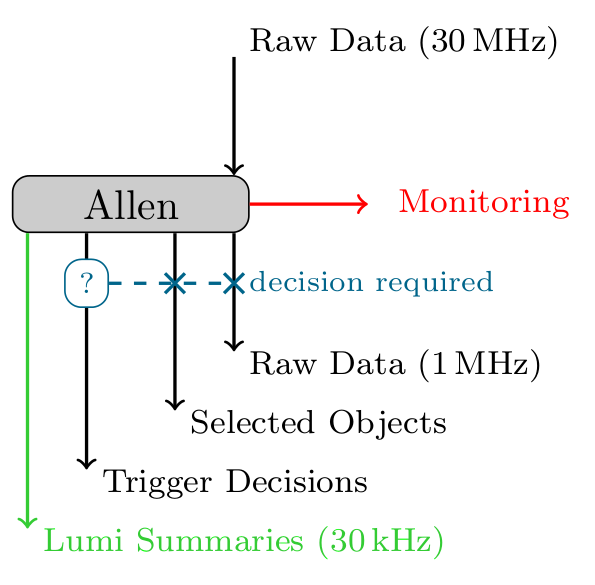

For data quality purposes, the acceptance rates of trigger lines and features of reconstructed tracks and vertices must be monitored live during data taking. The primary output channel of Allen (depicted downwards in the diagram below) attaches additional data banks to the input data stream. However, these banks are only propagated to the second level trigger if the event passes a trigger selection, and they are not available in real time. The monitoring system therefore serves a selection of histograms and counters directly to an external monitoring interface.

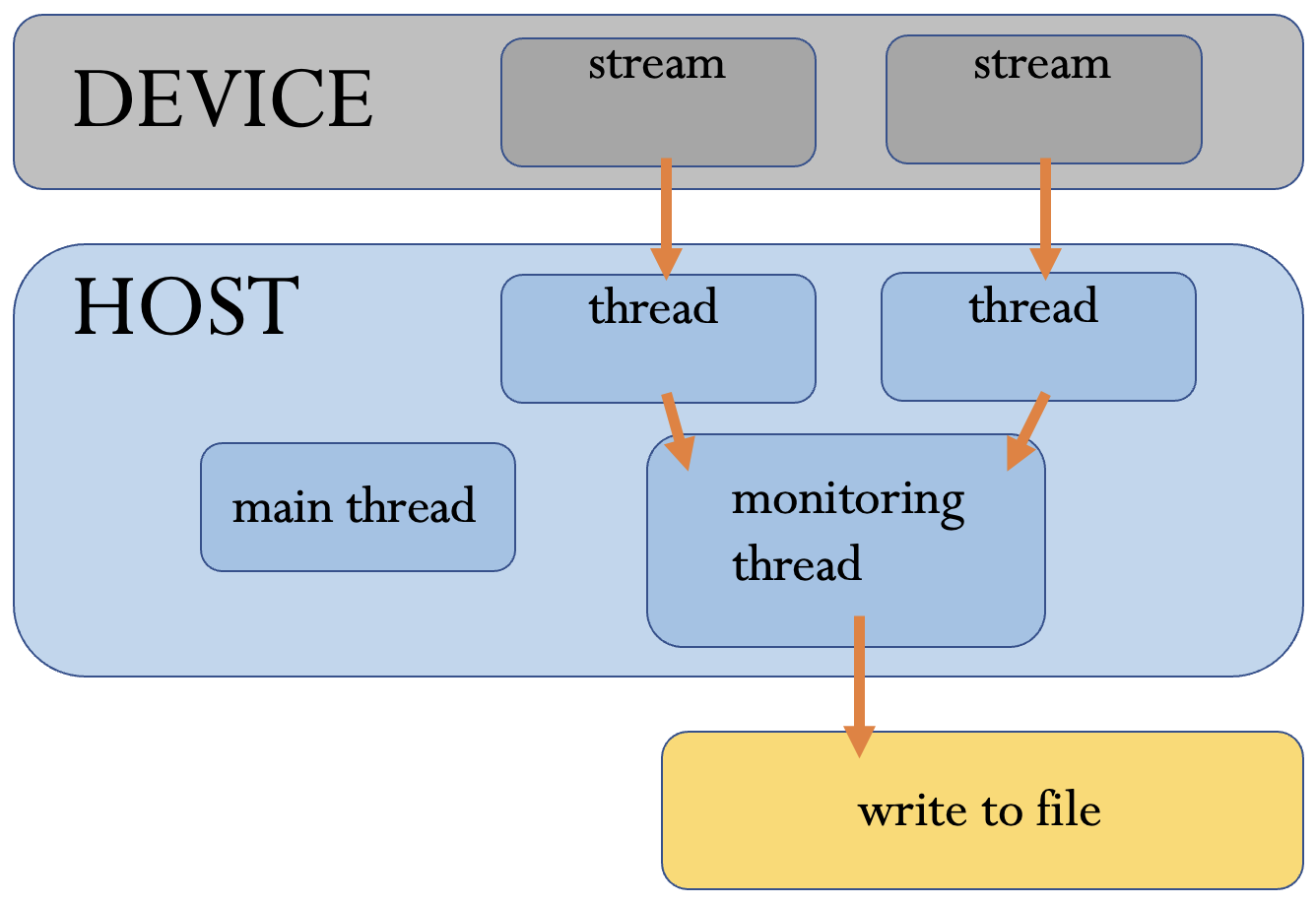

For the demonstration of Allen, monitoring histograms were populated in a dedicated monitoring thread using information from the output data banks as well as additional data copied back to the host specifically for monitoring purposes. The flow of monitoring-related data as it existed in the demonstration version is shown in the diagram below.

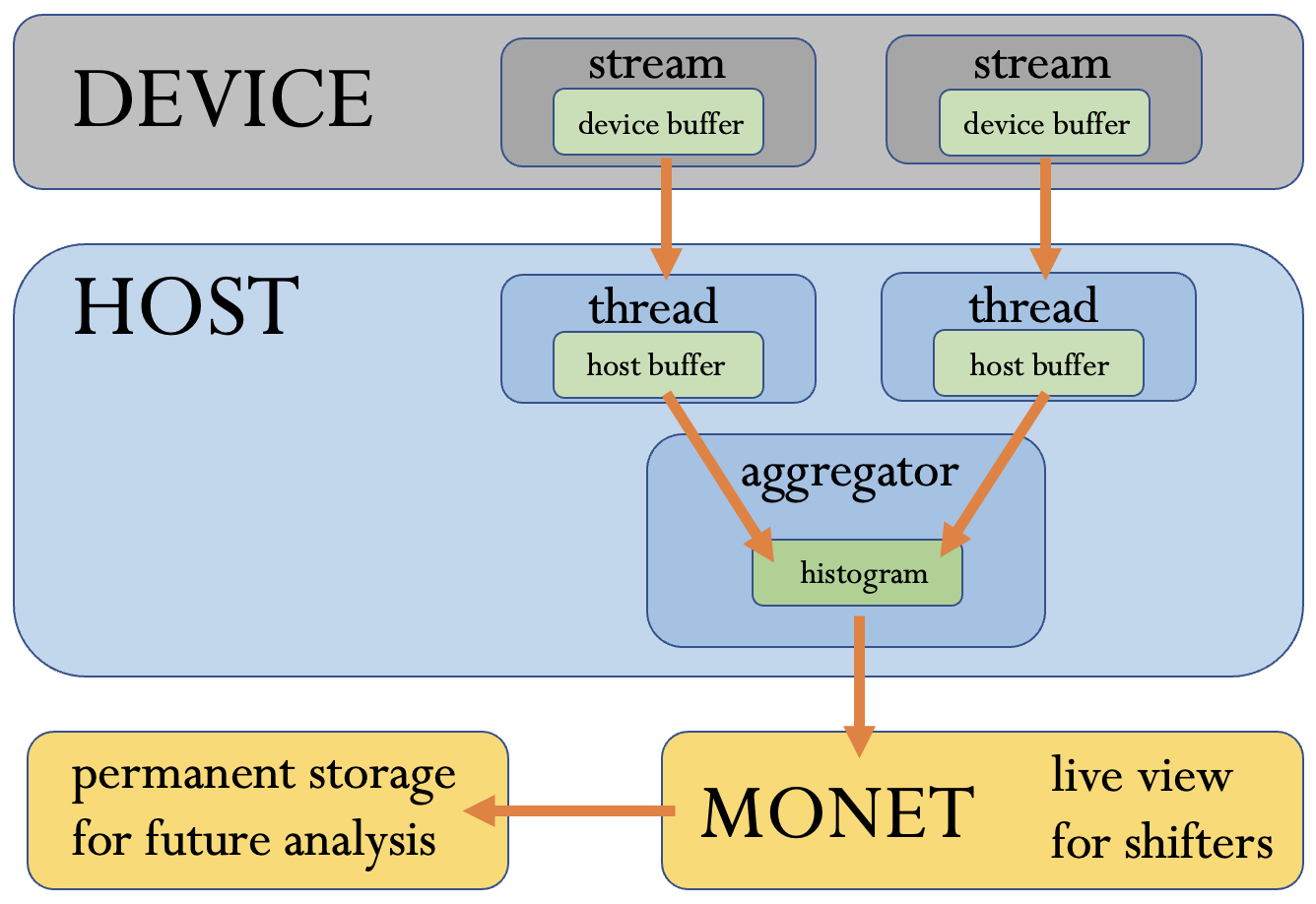

For the production version of Allen, we have now moved the monitoring accumulation from the dedicated thread into the threads responsible for running the Allen trigger pipeline. This allows any algorithm in the sequence to define and populate monitoring counters and histograms and also opens up the possibility of monitoring features of events that are not selected by the trigger (including possibly performing histogram-level analyses without triggering at all). For GPU algorithms, this is achieved in two ways. Quantities that are copied to the host memory for other reasons (e.g. line decisions) can be used to directly populate histograms in the host memory. Quantities that are never copied to the host are used to calculate bin contents on the GPU device. The bin contents are then copied to the host to be added to the histogram, reducing the amount of data that must be copied to the host. Histograms are served to an external monitoring display using the Gaudi MonitoringHub interface. An additional monitoring hub is also used within the aggregation thread, which combines histograms from separate GPU streams (which use independent host memory). The current flow of monitoring data is shown in the diagram below.
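The pattern described above (reduce raw quantities to bin contents before transfer, add them to a per-stream histogram on the host, then combine streams in an aggregation step) can be sketched in a few lines. This is a minimal illustration in plain Python, not Allen's code: `bin_contents` stands in for a GPU kernel, and `Histogram` and `aggregate` are hypothetical names that do not correspond to the Gaudi MonitoringHub API.

```python
def bin_contents(values, n_bins, low, high):
    """Stand-in for a device-side kernel: reduce raw values to bin counts.

    Only the n_bins counts, not the raw values, would then need to be
    copied to the host. Values outside [low, high) are dropped.
    """
    counts = [0] * n_bins
    width = (high - low) / n_bins
    for v in values:
        if low <= v < high:
            # min() guards against floating-point rounding at the upper edge.
            counts[min(int((v - low) / width), n_bins - 1)] += 1
    return counts


class Histogram:
    """Hypothetical host-side histogram owned by a single stream."""

    def __init__(self, n_bins, low, high):
        self.n_bins, self.low, self.high = n_bins, low, high
        self.bins = [0] * n_bins

    def add(self, counts):
        """Add bin contents copied back from the device."""
        self.bins = [a + b for a, b in zip(self.bins, counts)]


def aggregate(histograms):
    """Combine per-stream histograms, as an aggregation thread might."""
    first = histograms[0]
    total = Histogram(first.n_bins, first.low, first.high)
    for h in histograms:
        total.add(h.bins)
    return total


# Two streams, each with independent host memory.
stream0 = Histogram(4, 0.0, 4.0)
stream1 = Histogram(4, 0.0, 4.0)

stream0.add(bin_contents([0.5, 1.5, 1.7, 3.9, 7.0], 4, 0.0, 4.0))
stream0.add(bin_contents([1.0], 4, 0.0, 4.0))
stream1.add(bin_contents([0.1, 2.2, 2.9], 4, 0.0, 4.0))

total = aggregate([stream0, stream1])
print(total.bins)  # [2, 3, 2, 1]
```

Because each stream only ever adds integer bin counts, the aggregation step is a simple element-wise sum and does not depend on the order in which streams report.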

Team

- Mike Williams

- Kate Richardson

- Mike Sokoloff

Presentations

- 27 Apr 2022 - "Allen monitoring", Daniel Craik, IRIS-HEP Topical meeting

- 26 Apr 2020 - "Autoencoders for Compression and Simulation in Particle Physics", Daniel Craik, International Conference on Learning Representations 2020

- 27 Feb 2020 - "Allen: a GPU trigger for LHCb", Daniel Craik, IRIS-HEP Poster Session

- 27 Jan 2020 - "The Allen Project", Daniel Craik, IRIS-HEP Topical Meeting

- 9 Dec 2019 - "The Trigger and Real-time Reconstruction at LHCb", Daniel Craik, CPAD Instrumentation Frontier Workshop 2019

- 16 Oct 2019 - "LHCb Status and Outlook", Daniel Craik, LHC Users Association Annual Meeting 2019

Publications

- Allen: A high level trigger on GPUs for LHCb, R. Aaij et al., Comput. Softw. Big Sci. 4, 7 (2020) (19 Dec 2019) [128 citations] [NSF PAR].

Recent recordings

3 May 2022