IRIS-HEP has been established to meet the software and computing challenges of the experimental particle physics community. In order to meet the challenges of the HL-LHC, IRIS-HEP researchers are engaged in various exploratory projects. Some of these emerge from previous targeted research or as a means to engage the broader scientific community. IRIS-HEP is an excellent example of use-inspired research, and the products of that research are often applicable to other domains. Similarly, IRIS-HEP is embracing the NSF theme of convergence, as we must bring together developments in computer science, data science, and statistics to meet the demands of the LHC. Many of these projects have impact beyond high-energy physics.

Proteins and Robotics

In collaboration with researchers at DeepMind and MIT, Kyle Cranmer used machine learning to describe data that is restricted to certain shapes because of geometric constraints. This type of data appears in protein structure, robotics, geology, nuclear physics, and high energy particle physics. Read the paper: arXiv:2002.02428. (Protein figure from Boomsma et al., PNAS.)

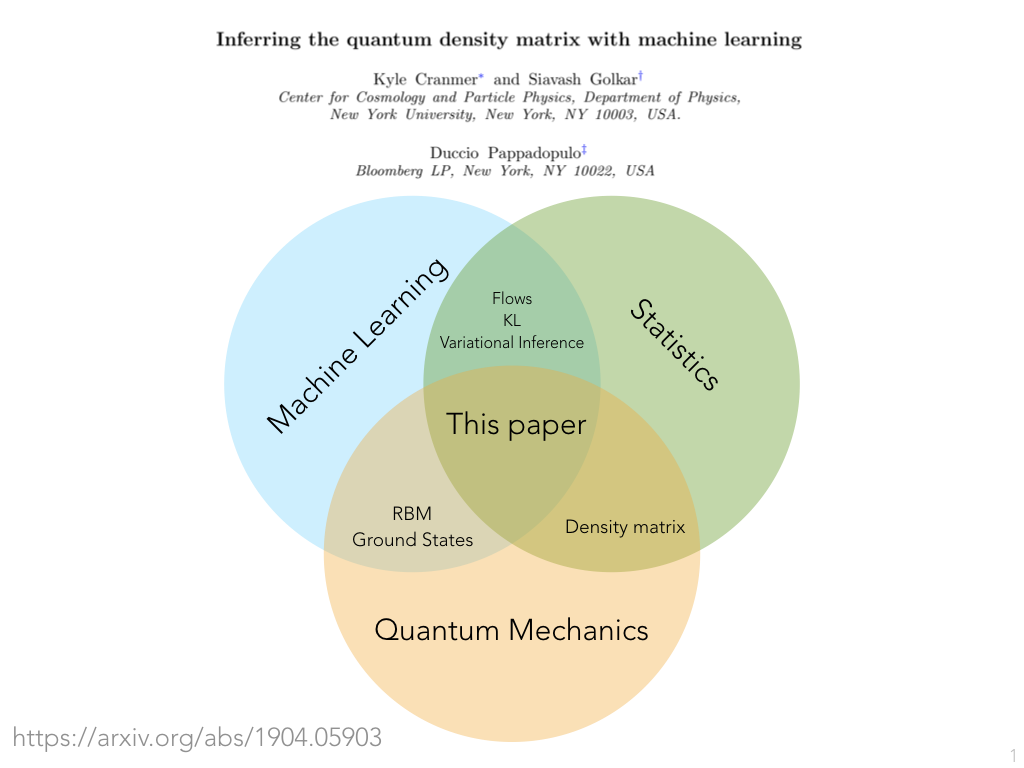

Quantum Information & Spectral Methods

Machine learning techniques are being used within IRIS-HEP to enable powerful new forms of statistical inference. Partially supported by IRIS-HEP's exploratory machine learning efforts, Kyle Cranmer and collaborators explored generalizing those techniques from classical data to quantum systems, which resulted in this paper. The technique also has applications in spectral learning, which has a broad range of applications in signal processing, and has been cited by researchers at DeepMind who developed Spectral Inference Networks. This work was followed up for quantum information in Variational Autoregressive Networks and Quantum Circuits by researchers at the Chinese Academy of Sciences.

Algorithmic Fairness, Privacy, and Causality

As machine learning becomes increasingly integrated into our modern lives, a major concern is that the outcome of an automated decision-making system should not discriminate between subgroups characterized by sensitive attributes such as gender or race. This is the basis of research around "algorithmic fairness". A similar problem appears in particle physics, where physicists don't want the outcome to depend on an uncertain quantity. To address this problem, Gilles Louppe, Michael Kagan, and Kyle Cranmer developed a technique to train a neural network to be independent of one or more attributes. The technique has been applied to, or has inspired, various work on algorithmic fairness, including "One-Network Adversarial Fairness". The image below is taken from this nice blog post by Stijn Tonk. In addition, the work has inspired work by researchers at INRIA and UC Berkeley in privacy and encryption, as well as research into the correlation-versus-causation dilemma.

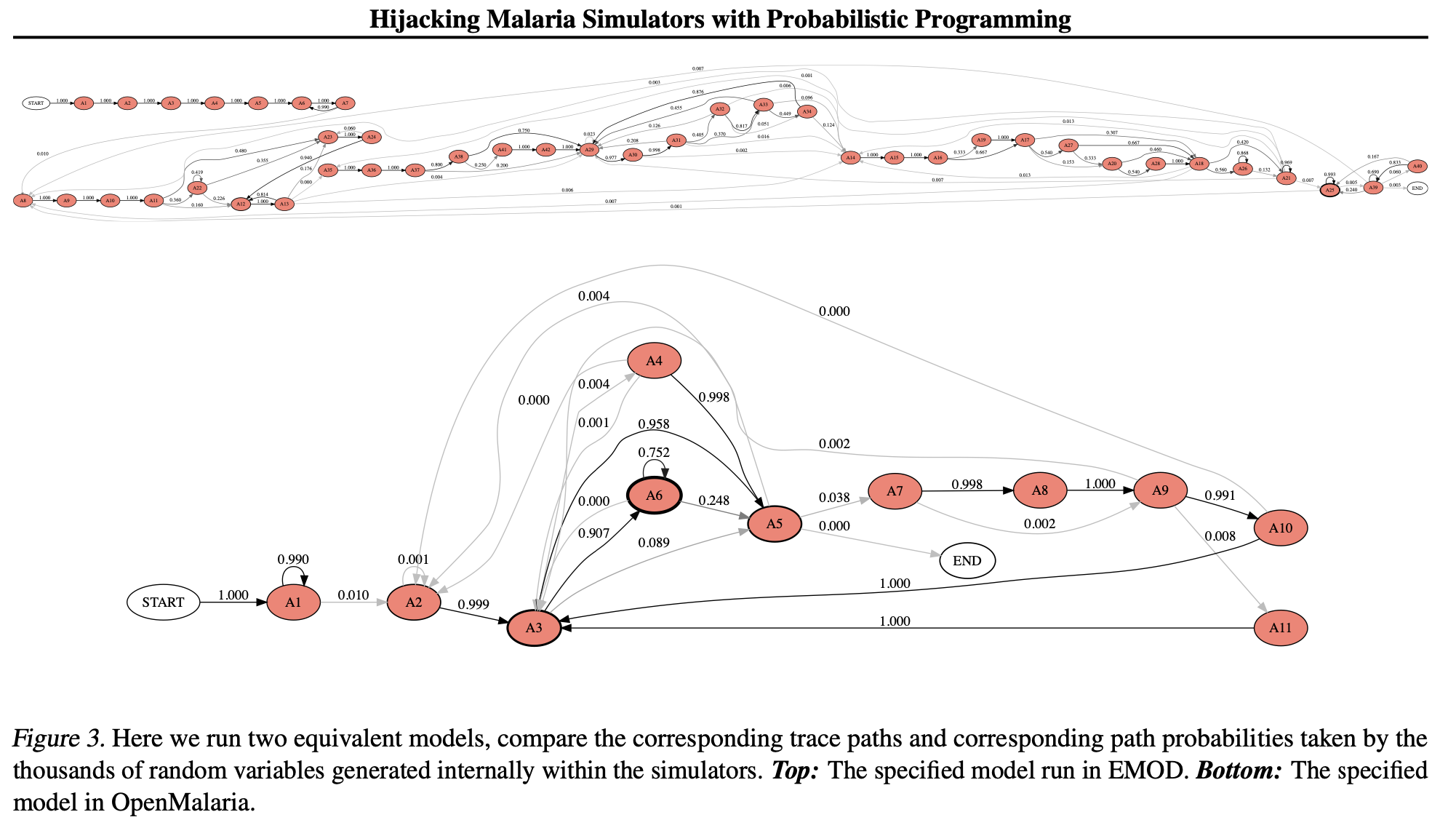
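
The idea of training a network to be independent of an attribute is commonly formalized as a two-player game. As a sketch only (the symbols $\theta_f$, $\theta_r$, $\lambda$, $\mathcal{L}_f$, and $\mathcal{L}_r$ are notation assumed here, not taken from this page): a classifier with parameters $\theta_f$ is trained against an adversary with parameters $\theta_r$ that tries to predict the sensitive or uncertain attribute from the classifier's output.

$$
E_\lambda(\theta_f, \theta_r) = \mathcal{L}_f(\theta_f) - \lambda\, \mathcal{L}_r(\theta_f, \theta_r),
\qquad
\hat\theta_f, \hat\theta_r = \arg\min_{\theta_f} \max_{\theta_r} E_\lambda(\theta_f, \theta_r)
$$

Here $\mathcal{L}_f$ is the classifier's loss, $\mathcal{L}_r$ is the adversary's loss, and $\lambda \ge 0$ trades accuracy against independence. When the adversary can do no better than guessing, the classifier's output carries no usable information about the attribute.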

Epidemiology

IRIS-HEP researchers collaborated with computer scientists at Oxford and NERSC to instrument particle physics simulators with new capabilities. The "Etalumis" project was nominated for best paper at SC’19 (SuperComputing) and has been written about here and here. The PPX protocol and pyprob tools developed for those studies have since been applied to epidemiological studies such as “Hijacking Malaria Simulators with Probabilistic Programming” (source of image) and are now being applied to COVID-19 (see “Planning as inference in epidemiological dynamics models”).

Genomics

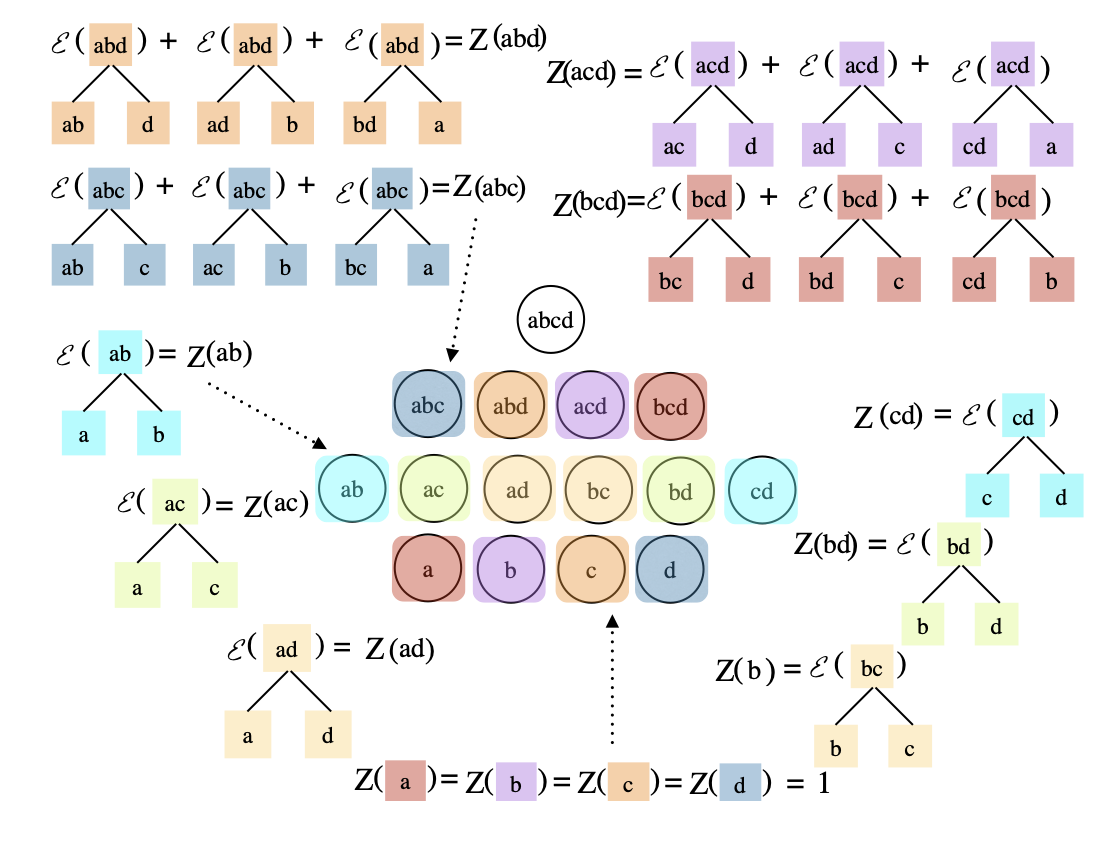

Hierarchical clustering is a common clustering approach for gene expression data. Within particle physics, hierarchical clustering appears in the context of jets, the most copiously produced objects at the Large Hadron Collider. One challenge is that the number of possible hierarchical clusterings grows very quickly with the number of objects being clustered. IRIS-HEP researchers Sebastian Macaluso and Kyle Cranmer connected with computer scientists at UMass Amherst to extend a clustering algorithm they had developed to the hierarchical case. This algorithm was applied to both particle physics and cancer genomics studies in Compact Representation of Uncertainty in Hierarchical Clustering.
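
To give a feel for how quickly the number of hierarchies grows, here is a minimal sketch that assumes each hierarchy is a rooted binary tree over n distinct objects. Under that assumption the count is the double factorial (2n - 3)!!. The function name `num_binary_hierarchies` is hypothetical and introduced only for illustration.

```python
def num_binary_hierarchies(n_leaves: int) -> int:
    """Count rooted binary trees over n labeled leaves, i.e. (2n - 3)!!."""
    if n_leaves < 1:
        raise ValueError("need at least one object to cluster")
    count = 1
    # Multiply the odd numbers 3, 5, ..., 2n - 3.
    # The product is empty (count stays 1) for n = 1 and n = 2.
    for odd in range(3, 2 * n_leaves - 2, 2):
        count *= odd
    return count


if __name__ == "__main__":
    for n in (3, 4, 5, 10, 20):
        print(n, num_binary_hierarchies(n))
```

With only 10 objects there are already 34,459,425 binary hierarchies, which is why exhaustive enumeration quickly becomes impractical and compact representations are attractive.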

Dark Matter Astrophysics

While we know dark matter exists in the universe, we still don't know what it is made of. One approach to pinning down the nature of dark matter is through astrophysics. In particular, images of galaxies that are distorted through gravitational lensing can encode subtle hints about the nature of dark matter, but extracting that information from the images is challenging. IRIS-HEP and former DIANA-HEP researchers joined astro-particle physicist Siddharth Mishra-Sharma to apply techniques originally developed for the LHC to this challenging problem in Mining for Dark Matter Substructure: Inferring Subhalo Population Properties from Strong Lenses with Machine Learning.

Dynamical Systems

As part of IRIS-HEP's exploratory machine learning efforts, we've developed collaborations with researchers at DeepMind who are interested in modeling physical systems. This research involves finding ways to incorporate various types of domain knowledge into neural networks. For instance, we know many systems are composed of more basic ingredients, or that interactions between those ingredients have some relational structure. Kyle Cranmer joined researchers at DeepMind for work that brought together techniques from physics and neural networks in Hamiltonian Graph Networks with ODE Integrators. This work has been extended with fantastic results on complex simulations of particle systems in Learning to Simulate Complex Physics with Graph Networks.

Team

- Kyle Cranmer

- Sebastian Macaluso

- Philip Harris

- Paolo Calafiura

- Mark Neubauer

- Lauren Tompkins

- Johann Brehmer

- Irina Espejo

- Mike Williams

Publications

- LHC EFT WG Report: Experimental Measurements and Observables, N. Castro, K. Cranmer, A. Gritsan, J. Howarth, G. Magni, K. Mimasu, J. Rojo, J. Roskes, E. Vryonidou and T. You, arXiv 2211.08353 (15 Nov 2022) [20 citations].

- Explainable AI for High Energy Physics, M. Neubauer and A. Roy, arXiv 2206.06632 (14 Jun 2022) [8 citations].

- Jets and Jet Substructure at Future Colliders, J. Bonilla et al., Front.in Phys. 10 897719 (2022) (14 Mar 2022) [26 citations].

- Flow-based sampling in the lattice Schwinger model at criticality, M. Albergo, D. Boyda, K. Cranmer, D. Hackett, G. Kanwar, S. Racanière, D. Rezende, F. Romero-López, P. Shanahan and J. Urban, Phys.Rev.D 106 014514 (2022) (23 Feb 2022) [51 citations].

- The Quantum Trellis: A classical algorithm for sampling the parton shower with interference effects, S. Macaluso and K. Cranmer, arXiv 2112.12795 (23 Dec 2021) [3 citations].

- Simulation Intelligence: Towards a New Generation of Scientific Methods, A. Lavin et al., arXiv 2112.03235 (06 Dec 2021) [3 citations].

- Neural simulation-based inference approach for characterizing the Galactic Center γ-ray excess, S. Mishra-Sharma and K. Cranmer, Phys.Rev.D 105 063017 (2022) (13 Oct 2021) [57 citations].

- Flow-based sampling for multimodal and extended-mode distributions in lattice field theory, D. Hackett, C. Hsieh, S. Pontula, M. Albergo, D. Boyda, J. Chen, K. Chen, K. Cranmer, G. Kanwar and P. Shanahan, arXiv 2107.00734 (01 Jul 2021) [62 citations].

- Flow-based sampling for fermionic lattice field theories, M. Albergo, G. Kanwar, S. Racanière, D. Rezende, J. Urban, D. Boyda, K. Cranmer, D. Hackett and P. Shanahan, Phys.Rev.D 104 114507 (2021) (10 Jun 2021) [66 citations].

- Reframing Jet Physics with New Computational Methods, The 25th International Conference on Computing in High-Energy and Nuclear Physics, vCHEP 2021. (21 May 2021) [11 citations] [NSF PAR].

- Exact and Approximate Hierarchical Clustering Using A*, The Conference on Uncertainty in Artificial Intelligence (UAI) 2021. (14 Apr 2021).

- An intelligent Data Delivery Service for and beyond the ATLAS experiment, W. Guan, T. Maeno, B. Bockelman, T. Wenaus, F. Lin, S. Padolski, R. Zhang and A. Alekseev, EPJ Web Conf. 251 02007 (2021) (28 Feb 2021) [9 citations] [NSF PAR].

- Deep Search for Decaying Dark Matter with XMM-Newton Blank-Sky Observations, J. Foster, M. Kongsore, C. Dessert, Y. Park, N. Rodd, K. Cranmer and B. Safdi, Phys.Rev.Lett. 127 051101 (2021) (03 Feb 2021) [180 citations].

- Introduction to Normalizing Flows for Lattice Field Theory, M. Albergo, D. Boyda, D. Hackett, G. Kanwar, K. Cranmer, S. Racanière, D. Rezende and P. Shanahan, arXiv 2101.08176 (20 Jan 2021) [79 citations].

- Semi-parametric gamma-ray modeling with Gaussian processes and variational inference, S. Mishra-Sharma and K. Cranmer, arXiv 2010.10450 (20 Oct 2020) [14 citations].

- Simulation-based inference methods for particle physics, J. Brehmer and K. Cranmer, arXiv 2010.06439 (13 Oct 2020) [30 citations].

- Sampling using SU(N) gauge equivariant flows, D. Boyda, G. Kanwar, S. Racanière, D. Rezende, M. Albergo, K. Cranmer, D. Hackett and P. Shanahan, Phys.Rev.D 103 074504 (2021) (12 Aug 2020) [150 citations] [NSF PAR].

- Secondary vertex finding in jets with neural networks, J. Shlomi, S. Ganguly, E. Gross, K. Cranmer, Y. Lipman, H. Serviansky, H. Maron and N. Segol, Eur.Phys.J.C 81 540 (2021) (06 Aug 2020) [44 citations] [NSF PAR].

- Discovering Symbolic Models from Deep Learning with Inductive Biases, M. Cranmer, A. Sanchez-Gonzalez, P. Battaglia, R. Xu, K. Cranmer, D. Spergel and S. Ho, arXiv 2006.11287 (19 Jun 2020) [76 citations].

- Flows for simultaneous manifold learning and density estimation, Advances in Neural Information Processing Systems 34 (NeurIPS2020) (30 Mar 2020) [28 citations].

- Equivariant flow-based sampling for lattice gauge theory, G. Kanwar, M. Albergo, D. Boyda, K. Cranmer, D. Hackett, S. Racanière, D. Rezende and P. Shanahan, Phys.Rev.Lett. 125 121601 (2020) (13 Mar 2020) [228 citations] [NSF PAR].

- Data Structures & Algorithms for Exact Inference in Hierarchical Clustering, The 24th International Conference on Artificial Intelligence and Statistics, PMLR 130:2467-2475, 2021. (26 Feb 2020) [3 citations].

- Set2Graph: Learning Graphs From Sets, Advances in Neural Information Processing Systems 34 (NeurIPS2020) (20 Feb 2020) [15 citations].

- Mining gold from implicit models to improve likelihood-free inference, Proceedings of the National Academy of Sciences; DOI:10.1073/pnas.1915980117 (20 Feb 2020) [139 citations].

- Normalizing Flows on Tori and Spheres, Thirty-seventh International Conference on Machine Learning (06 Feb 2020) [31 citations].

- Mining for Dark Matter Substructure: Inferring subhalo population properties from strong lenses with machine learning, The Astrophysical Journal, Volume 886, Number 1; DOI:10.3847/1538-4357/ab4c41 (19 Nov 2019) [92 citations] [NSF PAR].

- The frontier of simulation-based inference, Proceedings of the National Academy of Sciences DOI:10.1073/pnas.1912789117 (04 Nov 2019) [458 citations] [NSF PAR].

- Hamiltonian Graph Networks with ODE Integrators, A. Sanchez-Gonzalez, V. Bapst, K. Cranmer and P. Battaglia, arXiv 1909.12790 (Submitted to Machine Learning For the Physical Sciences NeurIPS2019 Workshop) (27 Sep 2019) [11 citations].

- Etalumis: bringing probabilistic programming to scientific simulators at scale, Proceedings of the International Conference for High Performance Computing, Networking, Storage, and Analysis (SC19), November 17-22, 2019 DOI:10.1145/3295500.3356180 (07 Jul 2019) [15 citations] [NSF PAR].

- A hybrid deep learning approach to vertexing, R. Fang, H. Schreiner, M. Sokoloff, C. Weisser and M. Williams, J.Phys.Conf.Ser. 1525 012079 (2020) (19 Jun 2019) [6 citations] [NSF PAR].

- FPGA-accelerated machine learning inference as a service for particle physics computing, J. Duarte et al., Comput.Softw.Big Sci. 3 13 (2019) (18 Apr 2019) [36 citations] [NSF PAR].

- Machine learning and the physical sciences, G. Carleo, I. Cirac, K. Cranmer, L. Daudet, M. Schuld, N. Tishby, L. Vogt-Maranto and L. Zdeborová, Rev.Mod.Phys. 91 045002 (2019) (25 Mar 2019) [1077 citations] [NSF PAR].

- The Machine Learning landscape of top taggers, G. Kasieczka et al., SciPost Phys. 7 014 (2019) (26 Feb 2019) [280 citations] [NSF PAR].

- Efficient Probabilistic Inference in the Quest for Physics Beyond the Standard Model, Advances in Neural Information Processing Systems 33 (NeurIPS) (20 Jul 2018) [8 citations].

- Machine Learning in High Energy Physics Community White Paper, K. Albertsson et al., J.Phys.Conf.Ser. 1085 022008 (2018) (08 Jul 2018) [305 citations].

- Adversarial Variational Optimization of Non-Differentiable Simulators, PMLR 89:1438-1447, 2019 (22 Jul 2017) [11 citations].

- QCD-Aware Recursive Neural Networks for Jet Physics, G. Louppe, K. Cho, C. Becot and K. Cranmer, JHEP 01 057 (2019) (02 Feb 2017) [211 citations].